Call Agent AI: Privacy Risks by Industry

Court rulings now classify AI call systems as third-party wiretappers. Learn which industries face the biggest risks and how to stay compliant.

Written by

Adam Stewart

Key Points

- Get all-party consent before AI monitors calls in real-time

- Train staff to avoid protected health info in background conversations

- Review vendor contracts for machine learning data use clauses

- Implement voice data encryption at point of collection

AI call agents are reshaping industries but come with serious privacy risks. These systems record and analyze calls, extracting sensitive data like health details, financial information, and biometric patterns. Key concerns include data breaches, regulatory violations, and misuse of voice data for profiling or fraud. Industries like healthcare, legal, and financial services face unique challenges due to strict privacy laws, including HIPAA, GDPR, and CCPA. Businesses must adopt strict safeguards, such as encryption, explicit consent, and automated redaction, to mitigate risks and comply with regulations.

Key Points:

- AI call agents record and analyze calls, capturing sensitive personal and biometric data.

- Major privacy risks include data leaks, profiling, and regulatory non-compliance.

- Industries like healthcare, legal, and finance face strict privacy rules (e.g., HIPAA, GDPR).

- Solutions include encryption, role-based access, explicit consent, and data retention policies.

Protecting customer privacy while using AI requires balancing efficiency with compliance and transparency.

Healthcare Industry Privacy Risks

Healthcare organizations face a unique set of challenges when implementing AI call agents. These systems don't just handle patient conversations - they can also capture biometric signals, background sounds, and other highly sensitive health information. Even a small lapse in safeguarding this data can result in HIPAA violations and steep financial penalties.

Accidental Recording of Protected Health Information

AI systems with sensitive activation triggers may unintentionally record conversations. Pre-call small talk, background voices, or post-call remarks could include protected health information (PHI) that gets captured and stored without proper consent [3]. Unlike human operators, who can exercise discretion about what to document, AI systems often record everything within their activation window.

The legal risks here are significant. In February 2025, the Northern District of California ruled in Ambriz v. Google, LLC that Google's Cloud Contact Center AI (GCCCAI) could be classified as a third-party wiretapper under the California Invasion of Privacy Act. The court's reasoning was that the AI system's ability to use customer service call data for improving its machine learning models made it more than just a tool - it was seen as an independent interceptor [6].

"The mere potential for a service provider to exploit intercepted data for its own benefit is sufficient to classify it as a third party under CIPA."

– Northern District of California Court [6]

To address these risks, healthcare providers must sign Business Associate Agreements (BAAs) with their AI vendors to clearly outline data security responsibilities [2]. Additionally, automated redaction tools can help by removing sensitive details - like account numbers or specific medical conditions - from audio transcripts [3]. This legal precedent highlights broader concerns, including the detection of medical conditions without patient consent, discussed next.

Medical Condition Detection Through Voice Analysis

AI technology doesn't just record conversations - it can also analyze voice patterns to infer health conditions. For instance, speech biomarkers can reveal neurological conditions like Parkinson's disease or depression, even if the patient hasn't disclosed these issues [3]. The AI examines elements like pitch, rhythm, tone, and pauses, capturing health insights that patients may not intend to share.

While patients might consent to call recordings, they may not realize the AI is also detecting subtle speech markers linked to their health. This goes far beyond traditional call recordings, which typically involve only audio and basic metadata [3]. Voice data, however, contains involuntary signals that reveal deeply personal information.

"Voice data can include biometric and emotional signals that are uniquely sensitive and can reveal far more about individuals than they consciously share."

– Aircall [3]

To minimize these risks, healthcare organizations should disable features like voiceprint creation, sentiment analysis, or emotion scoring unless they have a clear legal justification and explicit patient consent [7].
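One way to make this "off unless justified" posture concrete is a configuration object in which high-risk analysis features start disabled and can only be switched on with a documented legal basis and explicit consent. The following is a minimal sketch under those assumptions; the class, method, and feature names (`VoiceFeatureConfig`, `enable`, `HIGH_RISK_FEATURES`) are hypothetical and do not correspond to any particular vendor's API.

```python
from dataclasses import dataclass, field

# Hypothetical feature names; real platforms will label these differently.
HIGH_RISK_FEATURES = {"voiceprint_creation", "sentiment_analysis", "emotion_scoring"}


@dataclass
class VoiceFeatureConfig:
    """Tracks which high-risk voice features are enabled; all start disabled."""

    enabled: set = field(default_factory=set)

    def enable(self, feature: str, legal_basis: str, explicit_consent: bool) -> None:
        """Enable a feature only with a documented legal basis and explicit consent."""
        if feature not in HIGH_RISK_FEATURES:
            raise ValueError(f"unknown feature: {feature}")
        if not legal_basis.strip() or not explicit_consent:
            raise PermissionError(
                f"{feature} requires a documented legal basis and explicit consent"
            )
        self.enabled.add(feature)

    def is_enabled(self, feature: str) -> bool:
        """Report whether a feature has been explicitly enabled."""
        return feature in self.enabled
```

Because nothing is enabled at construction time, a deployment that never calls `enable` runs with voiceprint creation, sentiment analysis, and emotion scoring all turned off.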

Patient Call Monitoring and Privacy Violations

Real-time AI monitoring adds another layer of privacy concerns. When an AI "listens" to a live call, it constitutes interception. Privacy obligations begin the moment the AI accesses the call, not when the data is stored. This distinction is crucial, as some healthcare providers mistakenly believe that avoiding data storage exempts them from consent requirements.

"Storage is not the trigger - access is. Real‑time analysis without storage still counts as processing and monitoring."

– Burr & Forman LLP [7]

In jurisdictions requiring all-party consent, healthcare organizations must obtain recorded consent from every participant before enabling AI monitoring. Disclosures should explicitly state that automated tools and third-party providers are involved in the analysis. Contracts with AI vendors must also prohibit the use of call data for independent model training or product development [7]. To ensure compliance, providers should maintain detailed audit logs of AI activities and conduct regular privacy and security risk assessments [2].
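The all-party consent requirement and the audit-log recommendation can be combined into a single gate that runs before the AI is allowed to access a live call. The sketch below is illustrative only: `Participant`, `may_start_ai_monitoring`, and the log fields are hypothetical names, and it assumes the call platform can report whether recorded consent was captured for each person on the line.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class Participant:
    name: str
    consented: bool  # True only if recorded consent was captured on this call


def may_start_ai_monitoring(participants, audit_log):
    """Allow AI monitoring only if every participant consented; log the decision."""
    # An empty participant list is treated as "no consent" rather than allowed.
    allowed = bool(participants) and all(p.consented for p in participants)
    audit_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event": "ai_monitoring_check",
        "allowed": allowed,
        "missing_consent": [p.name for p in participants if not p.consented],
    })
    return allowed
```

Since access rather than storage is the trigger, the check is meant to run before any audio reaches the AI, and both approvals and refusals are written to the log.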

Regulators are closely watching how health data is handled. For example, in January 2023, the FTC filed a complaint against GoodRx Holdings Inc. for failing to notify over 55 million users that their health information had been shared with platforms like Facebook and Google for advertising purposes [8]. Similarly, in March 2023, the FTC targeted BetterHelp Inc. for sharing sensitive health data with third parties like Snapchat and Pinterest [8]. These cases highlight the growing regulatory focus on unauthorized sharing of health information. Balancing AI innovation with strict privacy rules is essential for healthcare organizations navigating these risks.

Legal Industry Privacy Risks

Law firms face unique challenges in protecting privacy when using AI call agents. One critical concern is maintaining attorney-client privilege - a cornerstone of legal practice. If confidential conversations are intercepted or mishandled, it can jeopardize sensitive case details, client identities, and privileged communications. Even a simple misconfiguration in an AI system could lead to unintended exposure of this information to third parties.

Attorney-Client Privilege in Recorded Calls

AI call systems record and store every conversation, creating a digital trail that could pose risks if not properly managed. When these recordings or transcripts are stored in the cloud or processed by external vendors, the potential for unauthorized access grows. Courts have increasingly applied a capability test to assess liability. This means that if an AI provider has the technical ability to use call data - whether or not they actually do - they might be classified as a third-party interceptor. Recent rulings in California highlight this, emphasizing that the possibility of data misuse can be enough to establish third-party status under privacy laws [4][6].

"The mere potential for a service provider to exploit intercepted data for its own benefit is sufficient to classify it as a third party under CIPA."

– Mark David McPherson, Partner, Goodwin Procter LLP [6]

AI systems can also inadvertently record background conversations, capturing sensitive details that were never intended for inclusion [3]. To address these risks, law firms should enforce strict confidentiality agreements with AI vendors, ensuring client data is not used for training models or shared with third parties [9]. Automated redaction tools can further protect sensitive information by removing details like case numbers or client names from transcripts before storage [3]. These measures are essential as firms navigate complex privacy regulations both in the U.S. and abroad.

International Data Handling Compliance

The global nature of legal work adds another layer of complexity. For instance, a New York law firm handling a case involving European clients must comply with both U.S. state laws and the EU General Data Protection Regulation (GDPR), which has far-reaching implications [5]. Under GDPR, voice data is considered biometric data, requiring explicit consent and heightened security measures. Similarly, Illinois' Biometric Information Privacy Act (BIPA) imposes strict rules on handling biometric data like voiceprints [5].

Privacy laws differ significantly by jurisdiction. While federal U.S. law generally permits one-party consent for call recording, states like California, Florida, and Pennsylvania require all-party consent [7]. GDPR adds another layer by mandating explicit consent for processing biometric data and restricting cross-border data transfers. Recent enforcement actions have underscored the challenges of compliance for organizations leveraging AI voice analytics.

"Voice data isn't limited to what a customer says; it also includes how they say it. AI systems can extract biometric and emotional signals... to infer identity, ethnicity, mood, intent, and even health status."

– Aircall [3]

To navigate these complexities, law firms should configure AI systems with geolocation controls that automatically enforce the strictest privacy standards based on the caller's location [7]. Contracts with AI providers must explicitly forbid using client data for model training or other independent purposes. Additionally, firms should secure all-party consent before implementing AI monitoring [7].
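A "strictest rule wins" policy of this kind might look like the sketch below. It is an assumption-laden illustration, not a legal reference: `ALL_PARTY_STATES` contains only the three states named in this article, `consent_rule_for_call` is a hypothetical helper, and any location that has not been explicitly verified as one-party (including an unknown location) falls back to all-party consent.

```python
# Only the states this article names as all-party; illustrative and incomplete.
ALL_PARTY_STATES = {"CA", "FL", "PA"}


def consent_rule_for_call(party_locations, verified_one_party=frozenset()):
    """Return 'all_party' or 'one_party', choosing the strictest applicable rule.

    party_locations: state codes for each participant, or None when unknown.
    verified_one_party: state codes the firm has confirmed as one-party (assumption).
    """
    if not party_locations:
        return "all_party"  # no location data: default to the strictest rule
    for location in party_locations:
        if (
            location is None
            or location in ALL_PARTY_STATES
            or location not in verified_one_party
        ):
            return "all_party"
    return "one_party"
```

Defaulting to all-party consent whenever a location is missing or unverified means a geolocation failure makes the system more cautious, not less.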

Client Data Analysis and Misuse

AI systems do more than record calls - they can analyze them in ways that pose further risks to confidentiality. For example, these systems might assess speech patterns to gauge emotional states, potentially exposing a client’s stress level or negotiation strategy. This kind of analysis could jeopardize legal strategies if accessed by opposing parties. AI systems can also create voiceprints that uniquely identify individuals, and under laws like Illinois' BIPA, collecting such data without written consent can result in statutory damages of $1,000 per negligent violation and $5,000 per intentional or reckless violation [5]. Sharing this metadata with third parties for marketing or profiling could compromise attorney-client privilege and sensitive consultations.

To mitigate these risks, law firms should disable non-essential AI features, such as emotional analysis, demographic profiling, or voiceprint creation, unless there is a clear legal need and explicit client consent [7]. Adopting privacy-by-design principles ensures that only essential information is collected [9]. Implementing strict data retention policies with automated deletion schedules further reduces the risks tied to long-term storage [5].

Financial Services Privacy Risks

The financial services sector, much like healthcare and legal industries, faces its own set of privacy challenges that require tailored security strategies. Banks, in particular, encounter significant risks when using AI call agents to handle sensitive information such as account numbers, balances, and payment details. According to a 2024 Deloitte survey, 40% of professionals identified data privacy as their top concern when it comes to AI systems [3][4][5].

Financial Data Exposure During Calls

Courts are increasingly treating AI vendors as "intercepting third parties" rather than neutral tools, which has significant legal implications. For instance, in August 2025, the U.S. District Court for the Northern District of California ruled in Taylor v. ConverseNow Technologies, Inc. that a class action lawsuit could proceed because the vendor had the technical ability to use customer data for product development. This decision established potential liability under the California Invasion of Privacy Act (CIPA), with statutory damages starting at $5,000 per violation [4].

AI systems don’t just handle direct financial data; they also capture incidental information like background conversations and emotional cues, which could hint at a customer’s financial struggles [3][5]. These risks are compounded by the growing threat of voice cloning. To mitigate these dangers, banks should adopt automated tools to redact sensitive financial data and ensure their contracts with AI vendors explicitly forbid data usage beyond the agreed-upon scope of service [7].

Voice Cloning and Identity Fraud

AI deepfake technology has made it possible to replicate a person’s voice with just a few seconds of audio [3]. If voice samples are stolen or stored without proper security, they can be exploited to bypass biometric authentication, creating a permanent security risk. AI systems analyze elements such as pitch, tone, and rhythm to generate unique voiceprints, which, if leaked, could lead to unauthorized access and fraud [3].

"If voice samples are stolen or stored insecurely, they can be weaponized to bypass authentication or gain unauthorized access to account information."

– Aircall [3]

Regulators are taking this issue seriously. In Hungary, for example, the Data Protection Authority fined a bank nearly €700,000 for using AI voice analytics without proper consent or adequate safeguards [5]. In the U.S., Illinois' Biometric Information Privacy Act (BIPA) provides statutory damages of $1,000 per negligent violation and $5,000 per intentional or reckless violation for collecting voiceprints without written consent [5]. Financial institutions can reduce these risks by implementing multi-factor authentication (MFA), avoiding sole reliance on voice biometrics for high-risk transactions, encrypting voice data with standards like AES-256, and refraining from creating or storing biometric voiceprints unless absolutely necessary [3][5][7].

Financial Profiling for Marketing

AI call agents often analyze speech patterns to infer details such as race, gender, ethnicity, emotional state, or health conditions [3][5][10]. While this capability can be useful, it also opens the door to misuse, such as discriminatory practices in credit or insurance decisions or unethical targeted marketing. For example, an AI system might incorrectly label a customer as "high-risk" based on vocal stress during a loan application [5][10].

To address these risks, financial institutions should disable features like sentiment analysis, demographic profiling, and emotion detection unless there is a clear business justification and explicit customer consent [7]. Adopting privacy-focused principles ensures that only essential data is collected and retained. Automated deletion schedules and strict data retention policies further minimize risks tied to long-term data storage [3][5]. Additionally, compliance with regulations such as the Gramm-Leach-Bliley Act (GLBA), GDPR, and CCPA is critical to maintaining customer trust and avoiding legal penalties [3][11].

How to Reduce AI Call Agent Privacy Risks

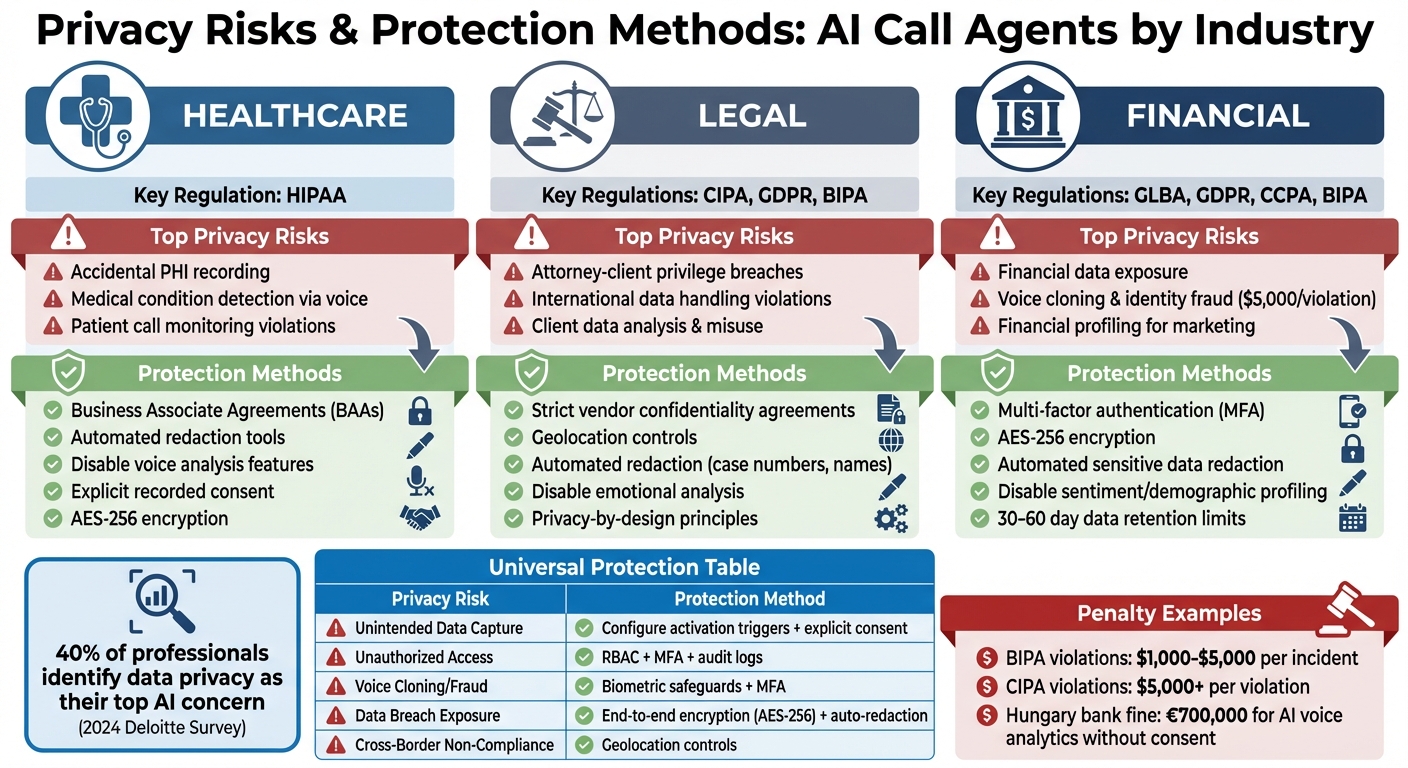

AI Call Agent Privacy Risks and Protection Methods by Industry

Data Security Best Practices

Protecting customer privacy starts with robust technical measures to guard against unauthorized access and data breaches. For data in transit, use TLS/SRTP, and for data at rest, rely on AES-256 encryption [3]. Additionally, automated redaction tools should remove sensitive details - like account numbers, Social Security numbers, or health information - from call transcripts before they are stored or used for AI training [3].
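As a rough illustration of automated redaction, the sketch below masks two kinds of identifiers in a transcript before it is stored. The patterns are deliberately simple assumptions (a U.S. Social Security number in `NNN-NN-NNNN` form and any 8 to 17 digit run treated as an account number); `PATTERNS` and `redact_transcript` are hypothetical names, and real deployments would need far broader coverage, including names and health details.

```python
import re

# Illustrative patterns only; production redaction needs much wider coverage.
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "ACCOUNT": re.compile(r"\b\d{8,17}\b"),
}


def redact_transcript(text: str) -> str:
    """Replace each pattern match with a bracketed label before storage."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[REDACTED {label}]", text)
    return text


print(redact_transcript("My SSN is 123-45-6789 and account 1234567890."))
# prints: My SSN is [REDACTED SSN] and account [REDACTED ACCOUNT].
```

Running redaction before the transcript is written anywhere keeps the unredacted text out of storage and out of any downstream training data.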

To control access, implement role-based access control (RBAC) so only authorized personnel can view recordings. Pair this with multi-factor authentication and maintain detailed audit logs to track who accesses data [3]. Enforce strict data retention policies to automatically delete or anonymize call data within 30–60 days, unless legal requirements dictate otherwise. This limits the exposure window in case of a breach [12].
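The access-control and retention rules above can be sketched in a few lines. Everything named here is hypothetical: the role names in `ROLES_ALLOWED_TO_VIEW`, the record layout (`id`, `created_at`, `legal_hold`), and the 30-day default, which is simply the lower end of the 30-60 day window. Multi-factor authentication is assumed to happen upstream, before these functions are called.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical role names; map these to your own identity provider's roles.
ROLES_ALLOWED_TO_VIEW = {"compliance_officer", "qa_supervisor"}


def can_view_recording(user, role, recording_id, audit_log):
    """Apply a role check and record every access attempt in the audit log."""
    allowed = role in ROLES_ALLOWED_TO_VIEW
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "recording_id": recording_id,
        "allowed": allowed,
    })
    return allowed


def expired_recordings(recordings, now, retention_days=30):
    """Return IDs of recordings older than the retention window.

    Each recording is a dict with 'id', 'created_at' (timezone-aware datetime),
    and an optional 'legal_hold' flag that exempts it from deletion.
    """
    cutoff = now - timedelta(days=retention_days)
    return [
        r["id"]
        for r in recordings
        if r["created_at"] < cutoff and not r.get("legal_hold", False)
    ]
```

A scheduled job could pass the result of `expired_recordings` to whatever deletion or anonymization routine the storage system provides, while the `legal_hold` flag covers the "unless legal requirements dictate otherwise" exception.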

Avoid generating unnecessary voiceprints to reduce regulatory risks under laws such as Illinois' Biometric Information Privacy Act (BIPA), which provides statutory damages of $1,000 per negligent violation and $5,000 per intentional or reckless violation [5]. Also, configure AI activation triggers carefully to avoid capturing unintended background conversations [3].
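To see why avoiding unnecessary voiceprints matters, it helps to run the arithmetic on the $1,000 and $5,000 per-violation amounts cited above. The helper below is a back-of-the-envelope sketch: `bipa_exposure_range` is a hypothetical name, the 2,000-caller figure is invented for illustration, and it assumes one violation per caller, which is a simplification because how violations are counted is itself a contested legal question.

```python
def bipa_exposure_range(violations, low=1_000, high=5_000):
    """Return (minimum, maximum) exposure in dollars for a violation count."""
    return violations * low, violations * high


# Hypothetical example: voiceprints created for 2,000 callers without consent.
low_total, high_total = bipa_exposure_range(2_000)
print(f"${low_total:,} to ${high_total:,}")
# prints: $2,000,000 to $10,000,000
```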

By following these practices, you create a secure foundation to address the unique regulatory needs of different industries.

Industry-Specific Protection Methods

Every industry faces its own challenges when it comes to safeguarding privacy. Tailor your technical strategies to meet these specific requirements.

Healthcare Providers

Healthcare organizations must ensure compliance with HIPAA by encrypting all protected health information (PHI) and obtaining explicit patient consent before recording calls [3][12]. For high-risk features like emotional analysis or biometric inference, rely on explicit, recorded consent rather than a "legitimate interest" framework [5][12].

Legal Firms

Law firms should use geolocation controls to keep client data within required jurisdictions, especially in international cases [3]. This is crucial for maintaining confidentiality and adhering to jurisdictional rules. Automated deletion schedules for attorney-client communications are essential, along with ensuring AI vendors do not access call content for their own purposes. Cases like Taylor v. ConverseNow Technologies highlight the importance of these safeguards [4].

Financial Institutions

For financial organizations, multi-factor authentication (MFA) should go beyond voice biometrics for high-risk activities like wire transfers or account changes [3]. Regular audits of AI systems are also necessary to identify and address potential biases, such as those related to accents, age, or vocal stress patterns [5].
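A step-up authentication rule of this kind can be expressed as a small policy function. This is a sketch under stated assumptions: the action names in `HIGH_RISK_ACTIONS` come from the examples in the text, while the factor names (`voice_biometric`, `otp`) and the function `is_authorized` are hypothetical.

```python
HIGH_RISK_ACTIONS = {"wire_transfer", "account_change"}  # examples from the text


def is_authorized(action, verified_factors):
    """Decide whether the verified factors suffice for the requested action.

    verified_factors: set of hypothetical factor names such as
    'voice_biometric', 'otp', or 'knowledge_pin'.
    """
    factors = set(verified_factors)
    if not factors:
        return False
    if action in HIGH_RISK_ACTIONS:
        non_voice = factors - {"voice_biometric"}
        # Require two or more factors, at least one of which is not voice.
        return len(factors) >= 2 and len(non_voice) >= 1
    return True
```

Under this policy a cloned voice alone cannot authorize a wire transfer, because a matching voiceprint is never sufficient by itself for a high-risk action.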

Privacy Risks and Protection Methods Compared

Here’s a quick breakdown of common privacy risks and their corresponding safeguards:

| Privacy Risk | Technical/Procedural Protection Method |
|---|---|
| Unintended Data Capture | Configure activation triggers conservatively and obtain explicit user consent [3]. |
| Unauthorized Data Access | Use role-based access control (RBAC), multi-factor authentication, and audit logs [3]. |
| Voice Cloning/Fraud | Avoid relying on voice biometrics alone and enforce multi-factor authentication (MFA) [3]. |
| Data Exposure in Breach | Encrypt data in transit (TLS/SRTP) and at rest (AES-256), and redact sensitive information automatically [3]. |
| Cross-Border Non-Compliance | Implement geolocation controls to ensure data stays within approved jurisdictions [3]. |

Always start calls with a clear audio disclaimer informing users that an AI virtual receptionist is handling the interaction and whether the call is being recorded [12]. For sensitive applications like emotional analysis or biometric identification, ensure explicit, recorded consent is obtained [5][12]. Regularly evaluate third-party AI vendors to confirm that their contracts include clauses limiting data processing and that they comply with international transfer agreements [12].

Conclusion: Managing Privacy While Using AI

Privacy-Focused AI Phone Systems

AI call agents can offer both efficiency and privacy protection when built with strong safeguards. The concept of "privacy-by-design" involves integrating compliance measures directly into the system's architecture rather than treating them as an afterthought. This includes features like default settings that limit data collection, robust encryption protocols, and automated tools to redact sensitive information from transcripts before storage [3].

For example, Dialzara incorporates these safeguards to comply with strict regulations in sectors like healthcare, legal, and financial services. The system is quick to deploy and adaptable to specific industry requirements, such as HIPAA compliance in healthcare or maintaining attorney-client privilege in legal practices.

These technical measures provide a solid foundation, but operational practices are equally important for ensuring privacy and trust.

Main Points for Business Owners

For business owners adopting AI call agents, transparency and consent should be top priorities. Always inform customers at the beginning of a call that AI is being used, and obtain explicit consent before recording or processing biometric data [13]. With 40% of professionals identifying data privacy as a major concern in AI implementation [3], clear communication is critical for building trust.

Vendor contracts should clearly restrict data usage to the agreed purposes. Courts are increasingly applying a "capability test", where companies can be held liable if their technology has the potential to misuse data, even if no misuse occurs [4][6]. Additionally, enforce strict data retention policies that automatically delete recordings once they’re no longer legally required.

A human-in-the-loop approach is essential to maintain quality and customer satisfaction. Ensure AI interactions include clear escalation paths to live agents to handle complex issues or prevent customer frustration [1]. Combining these operational strategies with robust technical safeguards enables businesses to use AI call agents effectively while prioritizing customer privacy across various industries.

FAQs

What consent is required before using an AI call agent?

To make outbound calls, you must first secure prior express consent from the person you’re contacting. Beyond that, it’s crucial to clearly disclose the use of AI during the interaction. This step is essential to align with both federal and state regulations. Being upfront about AI involvement not only ensures compliance but also helps mitigate potential legal issues.

Can AI voice analysis reveal health or biometric data?

AI voice analysis has the ability to uncover health and biometric data. Researchers are exploring how voice and speech patterns can help identify vocal biomarkers - specific traits in a person’s voice that might signal underlying health conditions. This technology shows promise in areas like monitoring and diagnosing a range of medical issues, offering a non-invasive way to gather critical health insights.

How should businesses set retention and deletion for call recordings?

Businesses need to create clear call recording retention and deletion policies tailored to legal requirements, industry guidelines, and recommended practices. To ensure compliance and security, consider automating these processes with tools like encryption, strict access controls, and routine audits.
