How AI Detects Scam Calls and Messages

AI can block up to 99% of verified scam calls in under 250 milliseconds using voice analysis and behavioral tracking, and it catches up to 40% more scams than traditional blacklists.

Written by

Adam Stewart

Key Points

- Catch up to 40% more scams with real-time analysis than with outdated blacklists

- Spot deepfake voices better than humans, who identify AI-cloned voices correctly only about 60% of the time

- Stop scams in under 250 ms with voice pattern detection technology

- Block 99% of scams while keeping 98% of real calls connected

AI is revolutionizing how we tackle scam calls and messages. With billions of unwanted calls and messages received monthly in the U.S., traditional methods like blacklists and Do Not Call registries are no longer enough. Scammers now use advanced tactics like AI voice-cloning, caller ID spoofing, and mass auto-dialing, making detection harder for humans.

Here’s how AI fights back:

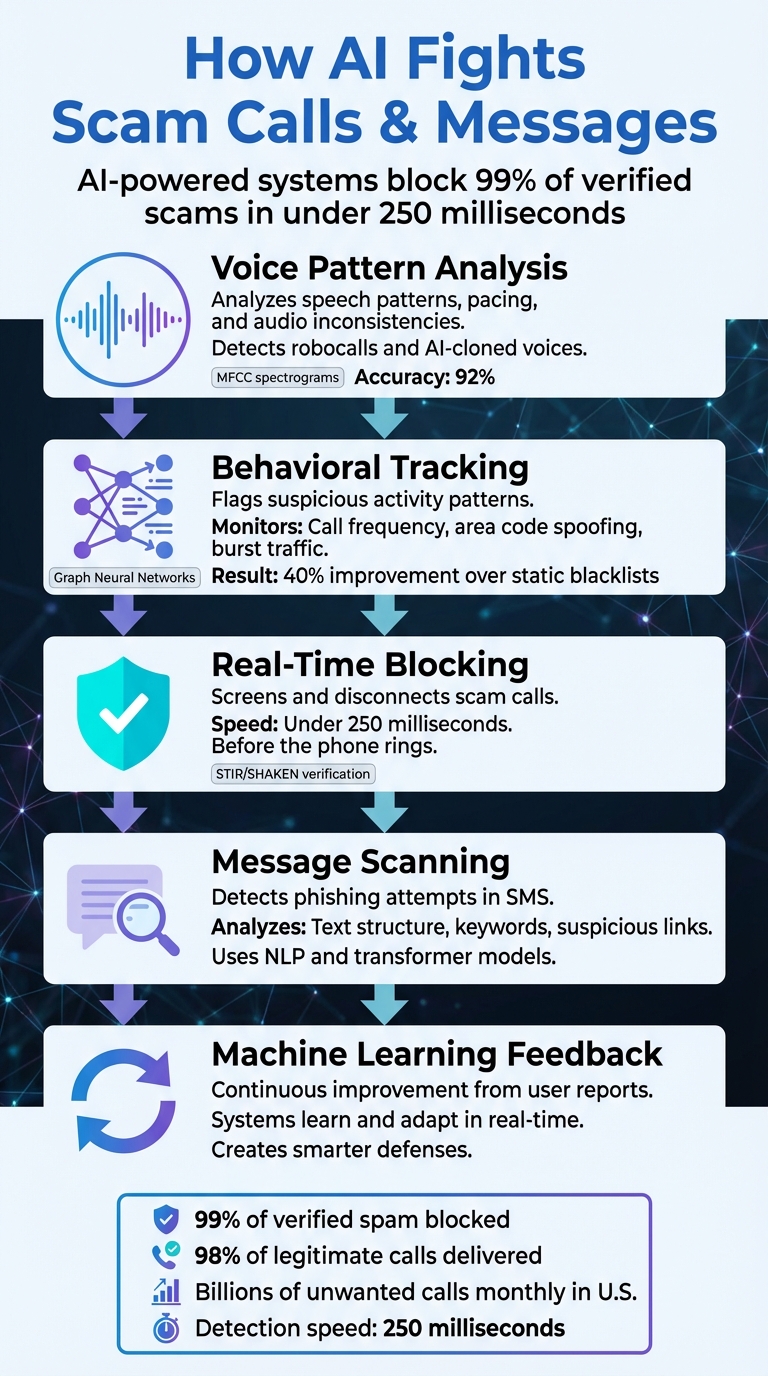

- Voice Pattern Analysis: AI identifies robocalls and deepfake voices by analyzing speech patterns, pacing, and subtle inconsistencies in audio.

- Behavioral Tracking: It flags suspicious activity, like numbers making thousands of calls in a short period or using area code spoofing.

- Real-Time Blocking: AI screens and disconnects scam calls in milliseconds, often before you even pick up.

- Message Scanning: AI detects phishing attempts by analyzing text structure, keywords, and links for scam indicators.

- Machine Learning Feedback: Systems improve continuously by learning from flagged spam and user input.

AI-powered tools now block up to 99% of verified scams while still letting about 98% of legitimate calls through. Businesses are also leveraging AI for call screening and customer protection, integrating these systems into their operations to prevent fraud and safeguard users.

How AI Detects and Blocks Scam Calls in Real-Time

How AI Detects Scam Calls

AI systems tackle scam calls by diving deep into multiple data layers, analyzing everything from the audio itself to the patterns in how calls are placed.

Voice Pattern Recognition and Anomaly Detection

AI can pick up on the telltale signs of synthetic voices. Using tools like Automatic Speech Recognition (ASR) and Natural Language Processing (NLP), these systems scan the first few seconds of a call for robocall indicators - things like a monotone pitch, fixed pacing, or the absence of natural pauses and back-and-forth conversation [1].

Neural networks, such as convolutional and recurrent models, are trained on massive datasets of spam calls. They extract acoustic features such as Mel-frequency cepstral coefficients (MFCCs) and spectrograms and pass them to transformer-based encoders. This combination reaches about 92% accuracy in spotting robocalls. These systems are also fast, detecting and disconnecting scam calls in under 250 milliseconds, often before the scammer can finish their opening line [1].
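The production models described above are trained neural networks. As a much simpler illustration of the kind of cue they pick up, here is a minimal sketch that measures two robocall indicators mentioned earlier: how often the audio pauses and how much its loudness varies. The 25 ms frame size, the silence threshold, the decision rule, and all function names are assumptions for illustration, not values from any real detector.

```python
import math
from typing import Sequence


def frame_energies(samples: Sequence[float], frame_len: int = 400) -> list[float]:
    """Root-mean-square energy of consecutive, non-overlapping frames.

    At 16 kHz audio, 400 samples is a 25 ms frame (an assumed, typical choice).
    """
    energies = []
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        energies.append(math.sqrt(sum(x * x for x in frame) / frame_len))
    return energies


def pause_and_variation(samples: Sequence[float], frame_len: int = 400,
                        silence_rms: float = 0.01) -> tuple[float, float]:
    """Return (pause_ratio, energy_cv) for an audio clip.

    pause_ratio: fraction of frames quieter than `silence_rms` (hypothetical threshold).
    energy_cv:   coefficient of variation of voiced-frame energy; flat, monotone audio scores low.
    """
    energies = frame_energies(samples, frame_len)
    if not energies:
        return 0.0, 0.0
    pause_ratio = sum(e < silence_rms for e in energies) / len(energies)
    voiced = [e for e in energies if e >= silence_rms]
    if len(voiced) < 2:
        return pause_ratio, 0.0
    mean = sum(voiced) / len(voiced)
    std = math.sqrt(sum((e - mean) ** 2 for e in voiced) / len(voiced))
    return pause_ratio, std / mean


def looks_like_robocall(samples: Sequence[float]) -> bool:
    # Hypothetical rule: almost no natural pauses AND almost constant loudness.
    pause_ratio, energy_cv = pause_and_variation(samples)
    return pause_ratio < 0.05 and energy_cv < 0.1
```

Hand-tuned thresholds like these are far weaker than trained models; the point is only to show that "no natural pauses" and "fixed pacing" are measurable properties of the signal.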

With scammers now using AI to clone voices, deepfake detection has become a key feature. These systems analyze subtle technical inconsistencies - like mismatched breath sounds or irregularities in phase coherence - that humans might overlook. Interestingly, a 2025 study showed that trained humans could only identify AI-cloned voices correctly about 60% of the time, while AI models performed significantly better [1].

But AI’s analysis doesn’t stop at the voice. It also examines caller behavior to further validate its findings.

Behavioral Analysis of Callers

AI goes beyond sound to track patterns in how calls are made. For example, if a previously unknown number suddenly makes 50,000 calls at 9 a.m. on a Monday, that’s a red flag [2].

Behavioral analytics look at signals like:

- Reach and frequency: How many unique numbers does a single source call?

- Call setup and abandonment rates: Auto-dialers often disconnect when they hit voicemail.

- Burst traffic from a single trunk: A signature move of robocall gateways [1] [2].
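A minimal sketch of how the three signals above might be computed from call records. The `CallRecord` fields, the one-hour window, and the function name are assumptions for illustration; real carriers work from call detail records with far richer fields.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class CallRecord:
    source: str              # calling number or trunk ID
    dest: str                # number dialed
    start_s: float           # call start time, seconds since epoch
    answered: bool           # did the callee (or voicemail) pick up?
    hung_up_on_answer: bool  # caller disconnected within seconds of the answer


def behavior_signals(records: list[CallRecord],
                     window_s: float = 3600.0) -> dict[str, dict[str, float]]:
    """Per-source reach, abandonment rate, and peak calls in any sliding window."""
    by_source = defaultdict(list)
    for r in records:
        by_source[r.source].append(r)

    signals = {}
    for source, calls in by_source.items():
        answered = [c for c in calls if c.answered]
        times = sorted(c.start_s for c in calls)
        # Two-pointer sliding window: most calls placed within any `window_s` span.
        peak, left = 0, 0
        for right, t in enumerate(times):
            while t - times[left] >= window_s:
                left += 1
            peak = max(peak, right - left + 1)
        signals[source] = {
            "reach": len({c.dest for c in calls}),  # unique numbers dialed
            "abandon_rate": (sum(c.hung_up_on_answer for c in answered) / len(answered))
            if answered else 0.0,
            "peak_calls_per_window": peak,
        }
    return signals
```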

By moving from static blacklists to real-time machine learning, carriers have improved their scam detection rates by up to 40% [1].

| Signal Pattern | Why It Raises a Flag | Typical Action |
|---|---|---|
| Short call setup time and high abandonment rate | Indicates auto-dialers disconnecting on voicemail | Temporary blocking |
| Burst traffic from a single trunk | Suggests robocall gateway activity | Carrier-level throttling |
| Number spoofed to match the recipient's area code | A social-engineering trick to gain trust | Further authentication required |
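The table above can be read as a small rule set. Here is a minimal sketch of that mapping, assuming the signals have already been computed per source. The thresholds are hypothetical placeholders rather than carrier values, and using a weak STIR/SHAKEN attestation as the hint that a same-area-code number may be spoofed is our own illustrative assumption.

```python
from dataclasses import dataclass


@dataclass
class SourceSignals:
    setup_time_s: float        # median time from dial to connect or hang-up
    abandon_rate: float        # share of answered calls the caller drops immediately
    trunk_calls_per_min: int   # calls per minute from the source's trunk
    caller_area_code: str
    callee_area_code: str
    attestation: str           # STIR/SHAKEN level "A", "B", "C", or "" if none


def actions_for(s: SourceSignals) -> list[str]:
    """Map each signal pattern in the table to its typical action (hypothetical thresholds)."""
    actions = []
    if s.setup_time_s < 2.0 and s.abandon_rate > 0.5:
        actions.append("temporary_block")         # auto-dialer behavior
    if s.trunk_calls_per_min > 1000:
        actions.append("carrier_throttle")        # burst from a single trunk
    if s.caller_area_code == s.callee_area_code and s.attestation != "A":
        actions.append("require_authentication")  # possible neighbor spoofing
    return actions
```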

Real-Time Call Screening and Blocking

AI doesn’t just slap a "Spam Risk" label on calls - it actively screens them before they even reach your phone. Using intent-based screening powered by NLP, these systems analyze the caller’s purpose in real time, putting legitimate calls through and holding back suspicious ones [4].

This process is reinforced by STIR/SHAKEN, a framework in which the originating carrier digitally signs the call's caller ID and states how confidently it vouches for it, so the receiving carrier can detect spoofed numbers. Combined with live audio analysis, AI can block 99% of verified spam while still ensuring 98% of legitimate calls make it through [1].
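Under SHAKEN, the originating carrier attaches a signed token called a PASSporT (a JSON Web Token) to the call's SIP Identity header. Its payload carries an attestation level (`A` for full, `B` for partial, `C` for gateway attestation) and the originating number. The sketch below only decodes those claims to show what a screening system reads from them. It deliberately skips signature verification, which a real verifier must perform using the carrier certificate referenced by the token's `x5u` header, so on its own it is not a security check. The function names are hypothetical.

```python
import base64
import json


def _b64url_decode(segment: str) -> bytes:
    # JWT segments are base64url without padding; restore the padding before decoding.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def read_shaken_claims(passport: str) -> dict:
    """Decode (NOT verify) a SHAKEN PASSporT and return the fields a screener uses."""
    header_b64, payload_b64, _signature_b64 = passport.split(".")
    header = json.loads(_b64url_decode(header_b64))
    payload = json.loads(_b64url_decode(payload_b64))
    if header.get("ppt") != "shaken":
        raise ValueError("not a SHAKEN PASSporT")
    return {
        "attest": payload.get("attest"),                 # "A", "B" or "C"
        "orig_tn": payload.get("orig", {}).get("tn"),    # claimed calling number
        "dest_tns": payload.get("dest", {}).get("tn", []),
        "cert_url": header.get("x5u"),                   # where a verifier fetches the signing cert
    }
```

A screener would typically treat a verified `A` attestation as strong evidence the caller ID is genuine, and weigh `B`, `C`, or a missing token more heavily against the AI risk score.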

For businesses, AI-powered virtual receptionists like Dialzara take things a step further. These agents screen unknown calls, assess the intent behind them, and either handle the inquiry directly or transfer legitimate calls to the right team. This not only shields businesses from scams but also ensures genuine customers aren’t left hanging.


How AI Detects Scam Messages

AI isn't just about securing phone calls - it’s also a powerful tool for protecting messaging channels. By analyzing textual cues, AI systems can identify and block phishing attempts before they even land in your inbox. These systems rely on advanced techniques to examine the content, structure, and behavior patterns of messages sent through SMS and other messaging apps.

Pattern Recognition in Text and Links

Using Natural Language Processing (NLP), AI scans messages for telltale signs of scams. Phrases like "urgent message", "special offer", or "you've won" are red flags because they’re crafted to provoke quick reactions [3][1]. Behind the scenes, models such as Convolutional Neural Networks (CNNs), Long Short-Term Memory (LSTM) networks, and transformer-based encoders work together to detect subtle spam indicators that might slip past simpler systems [2][1].

These models don’t just focus on individual words; they also assess the structure of the message. This allows them to distinguish between genuine communication and scripted or automated content with 92% accuracy, all while processing messages in under 250 milliseconds [1]. For instance, any text claiming to be from the IRS or Social Security Administration is automatically flagged as suspicious. As Mike Rudolph, Chief Technology Officer at YouMail, explains:

"If you get a call that says it is the [Internal Revenue Service] or the [Social Security Administration], that's unequivocally going to be a fraudster calling you." [2]

This same principle applies to text messages impersonating government agencies, ensuring they’re quickly identified and blocked.
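The CNN, LSTM, and transformer models described above learn these cues from data. As a far simpler, rule-based stand-in, here is a sketch that scores a message on the cues this section names: pressure phrases, links, and claims to be the IRS or Social Security Administration. The phrase lists, weights, and threshold are illustrative assumptions, not taken from any real filter.

```python
import re

# Illustrative cue lists; a real system learns these from labeled data.
URGENCY_PHRASES = ("urgent message", "special offer", "you've won", "act now", "final notice")
AGENCY_PATTERN = re.compile(
    r"\b(irs|internal revenue service|social security( administration)?|ssa)\b", re.I)
URL_PATTERN = re.compile(r"https?://\S+|\b[\w-]+\.(?:com|net|org|info|xyz|top)/\S*", re.I)


def scam_score(text: str) -> tuple[float, list[str]]:
    """Return a 0-1 score plus the reasons that contributed to it."""
    lowered = text.lower()
    reasons = []
    score = 0.0
    hits = [p for p in URGENCY_PHRASES if p in lowered]
    if hits:
        score += 0.3
        reasons.append("pressure phrase: " + ", ".join(hits))
    if URL_PATTERN.search(text):
        score += 0.3
        reasons.append("contains link")
    if AGENCY_PATTERN.search(text):
        score += 0.5  # government impersonation weighs most heavily
        reasons.append("claims to be a government agency")
    return min(score, 1.0), reasons


def is_suspicious(text: str, threshold: float = 0.5) -> bool:
    return scam_score(text)[0] >= threshold
```

Returning the reasons alongside the score makes it easy to show users why a message was flagged, which also helps when they report a mistake.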

Detecting Hyper-Personalization and Impersonation

Scammers are getting smarter, using generative AI to craft messages that mimic legitimate contacts. In response, AI systems leverage large language models (similar to GPT) to analyze these messages and detect patterns typical of scams, even when the wording appears polished [3].

AI doesn’t stop at the content - it also examines metadata, such as messaging frequency, and uses graph neural networks to uncover coordinated fraud operations [2][1]. These advanced techniques allow AI to stay ahead of scammers, even as their methods evolve.
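Messaging frequency is one of the simplest metadata signals to compute. A minimal sketch, assuming each message arrives with a sender ID and a timestamp in non-decreasing order per sender; the class name, the 60-second window, and the 20-message limit are hypothetical.

```python
from collections import defaultdict, deque


class SenderRateMonitor:
    """Flags senders whose message count in a sliding time window exceeds a limit."""

    def __init__(self, window_s: float = 60.0, max_messages: int = 20):
        self.window_s = window_s
        self.max_messages = max_messages
        self._times = defaultdict(deque)  # sender -> timestamps still inside the window

    def observe(self, sender: str, ts: float) -> bool:
        """Record one message; return True if the sender is now over the limit."""
        q = self._times[sender]
        q.append(ts)
        # Drop timestamps that have slid out of the window.
        while q and ts - q[0] >= self.window_s:
            q.popleft()
        return len(q) > self.max_messages
```

A per-sender deque keeps each update cheap, which matters when the check has to run on every message in real time.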

Spam Filtering with Machine Learning

Once suspicious patterns or impersonation attempts are flagged, AI steps in with machine learning pipelines to filter out spam. These pipelines combine multiple models, such as gradient-boosted decision trees for structured data and neural networks for analyzing message content [1].

What makes these systems especially effective is that they keep learning. When users mark a message as spam, that feedback flows back into the model and refines its detection. Phone carriers report up to a 40% increase in scam detection rates after moving from static blacklists to dynamic, machine-learning-based systems [1]. Every user report contributes to a smarter, faster defense, shortening the time it takes to block new scam campaigns [1].
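The feedback loop described above can be illustrated with online logistic regression, where each "spam" or "not spam" report nudges the model's weights by one stochastic-gradient step. This is a minimal sketch under assumed inputs (a dictionary of numeric features per message) and an assumed learning rate. It is not how any particular carrier's pipeline is built; real systems usually batch, validate, and monitor updates before deploying them.

```python
import math


class OnlineSpamModel:
    """Logistic regression updated one labeled report at a time (SGD on log-loss)."""

    def __init__(self, learning_rate: float = 0.5):
        self.lr = learning_rate
        self.weights: dict[str, float] = {}
        self.bias = 0.0

    def predict(self, features: dict[str, float]) -> float:
        """Probability that a message with these features is spam."""
        z = self.bias + sum(self.weights.get(k, 0.0) * v for k, v in features.items())
        z = max(-30.0, min(30.0, z))  # clamp to avoid overflow in exp
        return 1.0 / (1.0 + math.exp(-z))

    def report(self, features: dict[str, float], is_spam: bool) -> None:
        """Apply one gradient step for a user's spam / not-spam report."""
        error = self.predict(features) - (1.0 if is_spam else 0.0)  # d(loss)/dz
        self.bias -= self.lr * error
        for k, v in features.items():
            self.weights[k] = self.weights.get(k, 0.0) - self.lr * error * v
```

Because each report updates the weights immediately, a new scam template that users start flagging raises the score of similar messages without waiting for a full retraining cycle.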

Advanced AI Techniques for Scam Detection

As scammers get craftier - using tools like AI-generated voices and organized fraud networks - detection systems have stepped up their game. Modern AI tools now go far beyond basic pattern recognition, leveraging advanced methods to catch even the most convincing scams.

Deepfake Analysis in Voice and Text

Today’s scammers are using neural vocoders - sophisticated text-to-speech tools - to mimic voices of family members, employers, or even celebrities. AI has become better than humans at spotting these synthetic voices. How? By analyzing acoustic artifacts, or tiny imperfections in audio signals that fake voices can't perfectly reproduce.

These systems detect inconsistencies like unnatural or missing breath sounds, irregular pitch, awkward pacing, or mismatched emotional tones. They also use techniques like Fast Fourier Transform (FFT) and Constant-Q Transform (CQT) to turn audio into visual spectrograms. These spectrograms act like "fingerprints" that convolutional neural networks compare against known scam patterns for identification[1][2].
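To make the spectrogram step concrete, here is a minimal short-time Fourier transform in plain Python. It slices audio into overlapping Hann-windowed frames and takes the magnitude of each frame's discrete Fourier transform. A naive O(n²) DFT is used instead of an FFT so the sketch has no dependencies (an FFT computes the same values, only faster). The Constant-Q Transform and the convolutional classifier that would consume these frames are not shown, and the frame and hop sizes are assumptions.

```python
import cmath
import math


def dft_magnitudes(frame: list[float]) -> list[float]:
    """Magnitudes of the non-negative-frequency DFT bins of one frame (naive O(n^2))."""
    n = len(frame)
    return [
        abs(sum(x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(frame)))
        for k in range(n // 2 + 1)
    ]


def spectrogram(samples: list[float], frame_len: int = 64, hop: int = 32) -> list[list[float]]:
    """Rows are time frames, columns are frequency bins (bin k ~ k * sample_rate / frame_len Hz)."""
    window = [0.5 - 0.5 * math.cos(2 * math.pi * i / frame_len) for i in range(frame_len)]  # Hann
    rows = []
    for start in range(0, len(samples) - frame_len + 1, hop):
        frame = [s * w for s, w in zip(samples[start:start + frame_len], window)]
        rows.append(dft_magnitudes(frame))
    return rows
```

For example, a 1 kHz tone sampled at 8 kHz peaks in bin 8 of every row (8 × 8000 / 64 = 1000 Hz). Synthetic-voice artifacts show up as subtler patterns across such rows, which is what a trained classifier looks for.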

The results are impressive. Transformer-based models reach about 92% accuracy with response times under 250 milliseconds, fast enough to disconnect a scam call before the fraudster can even finish their opening line [1].

Beyond analyzing voice and text, AI is also mapping out how these scams operate on a larger scale.

Network and Behavioral Pattern Analysis

Scam calls are rarely isolated events - they’re often part of organized, interconnected networks. To uncover these networks, AI leverages Graph Neural Networks (GNNs) to map relationships between fraudsters, revealing coordinated operations rather than just individual scams[1].

Key tools in this process include analyzing call detail records (CDRs), which provide metadata like call duration, routing paths, and unusual network patterns. Behavioral analytics further enhance this approach by tracking metrics like reach (how many unique numbers a caller dials) and frequency (how many calls are made per hour)[1][2].
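Graph neural networks learn from the structure of such call graphs. As a much simpler illustration of why the graph view helps, the sketch below links calling numbers that share infrastructure in their call detail records (here, an originating trunk) and groups them with union-find, so numbers that rotate frequently still surface as one operation. This connected-components approach is a swapped-in, non-learning technique, and the CDR field names and the minimum group size are assumptions.

```python
from collections import defaultdict


class UnionFind:
    def __init__(self):
        self.parent: dict[str, str] = {}

    def find(self, x: str) -> str:
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a: str, b: str) -> None:
        self.parent[self.find(a)] = self.find(b)


def coordinated_groups(cdrs: list[dict], min_size: int = 3) -> list[set[str]]:
    """Group calling numbers connected through a shared trunk; return the large groups."""
    uf = UnionFind()
    for cdr in cdrs:
        # The trunk is a graph node, so every number that used it ends up connected.
        uf.union("num:" + cdr["caller"], "trunk:" + cdr["trunk"])
    groups: dict[str, set[str]] = defaultdict(set)
    for node in list(uf.parent):
        if node.startswith("num:"):
            groups[uf.find(node)].add(node[len("num:"):])
    return [g for g in groups.values() if len(g) >= min_size]
```

Legitimate traffic shares trunks too, so a single edge type like this would over-group in practice; learned models weigh many kinds of links (routing paths, timing, callback numbers) together.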

This shift from static blacklists to dynamic, real-time machine learning pipelines has delivered results. Carriers report up to 40% better scam detection rates using these advanced systems[1]. However, as Brian Podolak, CEO of Vocodia, points out, the industry still faces challenges:

"The data has to be centralized somewhere, in my opinion, for it to be successful. Otherwise, you're going to have what you have right now, which is this fractured environment."[2]

Despite these obstacles, businesses are finding ways to stay ahead. For example, silent interception systems can score and block scam calls within 200 milliseconds - stopping them before the recipient’s phone even rings[1]. When paired with the STIR/SHAKEN framework, which uses cryptographic tokens to verify caller ID authenticity, these layered defenses make it harder than ever for scammers to succeed[1][2].

Integrating AI Scam Detection into Business Systems

Protecting communication channels requires more than just basic caller ID these days. The best defense combines rule-based filters, cryptographic authentication (using STIR/SHAKEN protocols), and real-time AI voice analysis to detect threats effectively[1].
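The layered approach described above can be sketched as a short decision function: cheap list checks first, then the STIR/SHAKEN attestation, then an AI risk score. A call the model cannot score within a latency budget is flagged rather than silently dropped. The 200 ms default echoes the interception figure cited earlier; the thresholds, field names, and the `ai_risk` callable are hypothetical placeholders for a real voice-analysis model.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class IncomingCall:
    caller: str
    attestation: str  # SHAKEN level "A", "B", "C", or "" if the call carried no token


def screen(call: IncomingCall, allow: set[str], block: set[str],
           ai_risk: Callable[[IncomingCall], float], budget_s: float = 0.2) -> str:
    """Return "connect", "block", or "flag" for a call."""
    start = time.monotonic()
    # Layer 1: rule-based lists; explicit business decisions win.
    if call.caller in allow:
        return "connect"
    if call.caller in block:
        return "block"
    # Layer 2: cryptographic caller-ID attestation.
    weak_id = call.attestation in ("", "C")
    # Layer 3: AI risk score from live analysis.
    risk = ai_risk(call)
    if time.monotonic() - start > budget_s:
        return "flag"  # too slow to decide safely; let a person decide
    if risk > 0.9 or (weak_id and risk > 0.6):
        return "block"
    if risk > 0.4 or weak_id:
        return "flag"
    return "connect"
```

Ordering the layers from cheapest to most expensive keeps most decisions fast, and letting a weak attestation lower the blocking threshold is one simple way to combine the cryptographic and AI signals.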

By integrating AI tools with existing business systems like CRMs, help desks, and calendars, businesses can automate caller verification, update records instantly, and route legitimate calls while blocking suspicious ones. This approach not only improves operational efficiency but also strengthens security measures.

Using AI Virtual Agents for Call Screening

AI virtual agents are transforming how businesses handle incoming calls. These agents can answer calls, ask qualifying questions like “Who may I say is calling?” or “What is this in regard to?”, and then decide whether to connect, transfer, or disconnect the call[5].

A great example of this is AT&T’s trial of its AI Digital Receptionist in September 2025. This system uses conversational AI to determine if a caller is human or an AI and assesses the urgency of the request based on specific business criteria. It even provides live transcripts and can terminate suspicious calls before they reach employees[5]. Andy Markus, Chief Data and AI Officer at AT&T, described this shift succinctly:

"Tired of Screening Spam Calls? An AI Digital Receptionist Could Do It for You"[5]

Dialzara showcases another successful implementation of AI-driven call screening. Their AI phone agents handle tasks like screening calls, transferring them, and managing client intake. The system integrates with over 5,000 business apps, operates 24/7, and understands industry-specific terms. Setup is quick - just input basic business details, choose a voice, and configure call forwarding.

For trusted contacts, businesses can create “do not screen” lists to bypass AI checks. Meanwhile, for calls that fall into a gray area, AI can assign a risk badge, allowing employees to proceed with caution[5].
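A screening agent's final routing step, as described in this section, might be sketched like this: numbers on a "do not screen" list bypass checks, clear scam signals end the call, and gray-area calls go through with a risk badge. The sketch assumes the agent's conversation has already produced a risk score and a stated purpose; the names, thresholds, and purpose-to-team mapping are hypothetical, not Dialzara's or AT&T's actual logic.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ScreeningResult:
    action: str                 # "connect", "transfer", or "disconnect"
    team: Optional[str] = None  # destination for transfers
    risk_badge: bool = False    # shown to the employee for gray-area calls


def route_call(caller: str, stated_purpose: str, risk: float,
               do_not_screen: set[str], teams: dict[str, str]) -> ScreeningResult:
    if caller in do_not_screen:
        return ScreeningResult("connect")          # trusted contact: skip AI checks
    if risk >= 0.8:
        return ScreeningResult("disconnect")       # clear scam signals
    team = teams.get(stated_purpose.lower())       # answer to "What is this in regard to?"
    gray = risk >= 0.4                             # gray area: proceed with caution
    if team:
        return ScreeningResult("transfer", team=team, risk_badge=gray)
    return ScreeningResult("connect", risk_badge=gray)
```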

Customizable AI Features for Businesses

Customizable AI features allow businesses to adapt their defenses to meet specific communication needs. Advanced systems let companies set industry-specific guardrails, such as recognizing specialized terminology, maintaining an appropriate tone, and limiting certain topics to ensure the AI operates safely and reliably.

With user-defined white and black lists, businesses can guarantee that crucial communications from trusted partners are never missed while blocking known offenders automatically[1]. Moving from static blacklists to dynamic machine learning pipelines can boost scam detection rates by as much as 40%. Additionally, advanced AI filters can block up to 99% of verified spam calls[1].

The benefits are clear: businesses save time, reduce risk, and ensure their teams focus only on legitimate calls.

Conclusion

Scam calls and messages continue to pose a serious digital threat. As mentioned earlier, Americans receive billions of unwanted calls each month, and the financial losses for individual victims can be substantial. Meanwhile, fraudsters are constantly refining their tactics with tools like AI-cloned voices and rotating phone numbers.

To combat these evolving threats, AI systems have advanced significantly. What started as basic filtering has transformed into highly adaptive defenses. Today’s machine learning models can block about 99% of verified spam calls while ensuring about 98% of legitimate calls are delivered. These systems analyze factors like call duration, routing paths, acoustic patterns, and phase coherence to identify threats in under 250 milliseconds [1].

For businesses, AI call screening has become essential. These tools intercept suspicious calls, verify caller identities, and automatically route genuine inquiries, safeguarding both business operations and customer trust.

As scams grow more sophisticated, AI continues to push the boundaries of detection. Techniques like graph neural networks and adaptive learning models are raising the bar for scam prevention [1].

AI-driven business call answering is no longer optional - it’s a critical shield for protecting businesses and their customers.

FAQs

How does AI tell a real person from a robocall or deepfake voice?

AI can identify robocalls and deepfake voices by closely examining specific acoustic and behavioral clues. These include things like unnatural pauses, robotic-sounding tones, or speech patterns that don’t flow naturally. By leveraging voice biometrics and analyzing real-time metadata, it can detect irregularities such as mismatched pitch or unusual background noise. This technology plays a key role in flagging suspicious calls, helping to shield users from scams by pinpointing synthetic or automated voices with impressive accuracy.

Will AI scam blocking accidentally block important calls or texts?

AI-powered scam blocking systems, such as Dialzara, are built to automatically filter out spam and fraudulent calls. While these tools are highly effective, there’s a slight possibility that legitimate calls or texts might get blocked. This can happen if a caller is incorrectly flagged as spam or if the filtering settings aren’t adjusted properly. Taking the time to configure these settings correctly can help reduce the chances of missing important communications.
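That slight possibility comes down to where the blocking threshold sits. Here is a minimal sketch, using made-up risk scores rather than real data, of how one might measure the two rates quoted in this article (share of spam blocked and share of legitimate calls delivered) at different thresholds. The function name is hypothetical.

```python
def threshold_tradeoff(spam_scores: list[float], legit_scores: list[float],
                       threshold: float) -> tuple[float, float]:
    """Return (spam_blocked_rate, legit_delivered_rate) when scores >= threshold are blocked."""
    blocked = sum(s >= threshold for s in spam_scores) / len(spam_scores)
    delivered = sum(s < threshold for s in legit_scores) / len(legit_scores)
    return blocked, delivered


spam = [0.95, 0.9, 0.85, 0.7, 0.4]    # invented scores for known scam calls
legit = [0.05, 0.1, 0.2, 0.3, 0.75]   # invented scores for real customer calls
print(threshold_tradeoff(spam, legit, 0.8))  # (0.6, 1.0)
print(threshold_tradeoff(spam, legit, 0.6))  # (0.8, 0.8)
```

Lowering the threshold from 0.8 to 0.6 blocks more spam in this toy data but also starts blocking a legitimate call, which is why allowlists and careful threshold settings matter.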

How can a small business quickly set up AI call screening?

Small businesses can easily implement AI call screening with a tool like Dialzara. The setup is straightforward: create an account, configure qualifying questions, and activate call forwarding. In just about five minutes, this system can help block spam calls, identify potential leads, and transfer calls smoothly - saving time and improving efficiency.
