How AI Tracks Context in Customer Conversations

Discover how modern AI remembers past conversations to deliver up to 47% faster resolutions and personalized customer experiences.

Written by

Adam Stewart

Key Points

- Use four-layer memory to track customer history beyond basic chat logs

- Apply sliding window techniques to balance context depth with response speed

- Build dialogue state tracking for consistent multi-turn conversations

- Measure success with resolution time and customer satisfaction scores

When you interact with AI systems, do you often feel like you're starting from scratch every time? That's because most AI models, like GPT-4, don't have memory - they handle each interaction independently. But context-aware AI changes the game. By simulating memory, it combines static data (like your account details) with real-time signals (like your location or device) to make conversations smoother and more personalized.

Key takeaways:

- Traditional AI lacks memory, leading to repetitive user experiences.

- Context-aware AI uses dialogue state tracking to remember user intent, past exchanges, and critical details.

- Techniques like sliding windows, hierarchical summarization, and dynamic context selection help manage memory efficiently.

- Systems like Dialzara use layered memory (working, session, episodic, and semantic) to ensure vital information is retained, even in long conversations.

- Businesses using context-aware AI report up to 47% faster resolution times and reduced costs.

Context tracking isn't just about remembering details - it’s about making interactions feel natural and efficient, saving time for both users and businesses.

How AI Tracks Context in Conversations

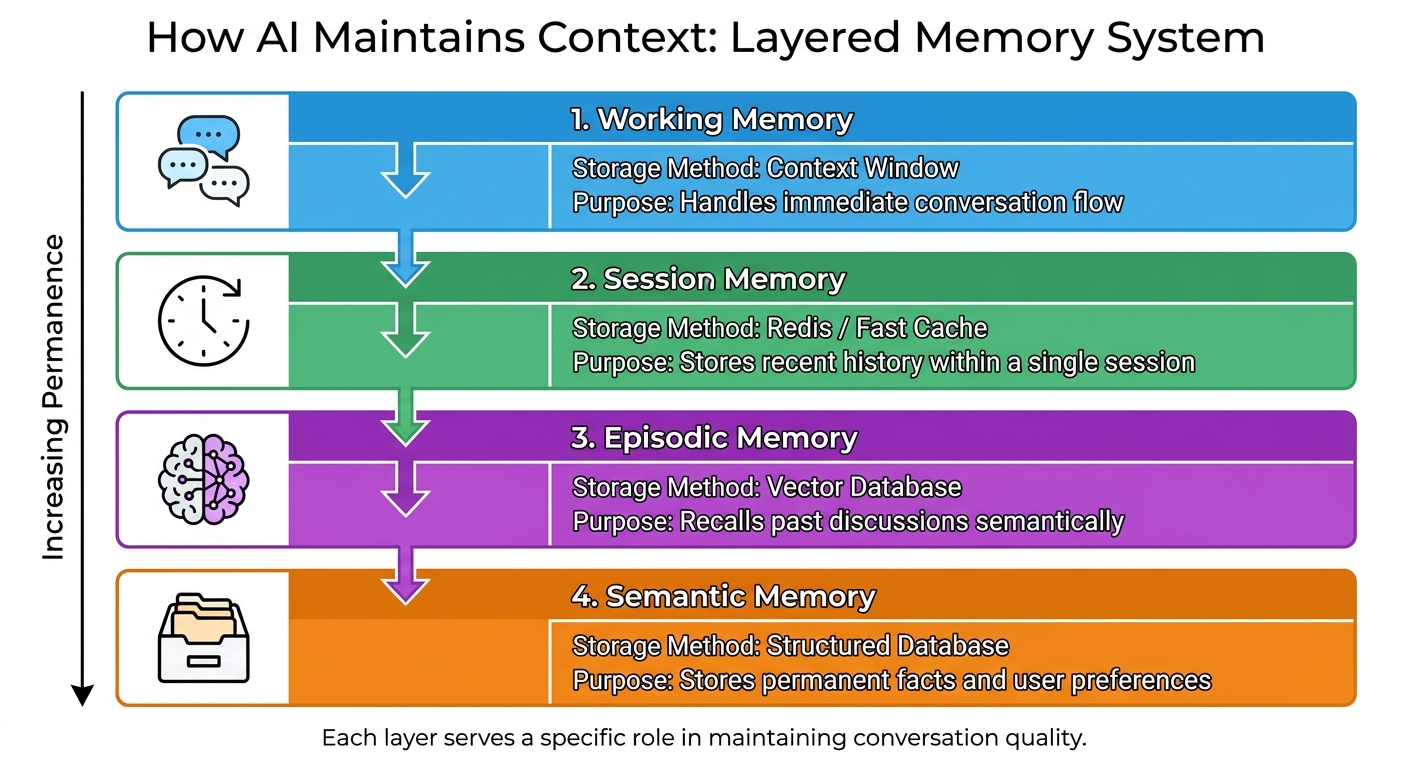

AI Memory Architecture: Four-Layer Context Tracking System

To understand how AI keeps track of context in conversations, it’s important to first grasp a key limitation: most modern AI models don’t have memory. Each interaction with systems like GPT-4 or Claude starts fresh unless developers specifically provide them with prior exchanges [4][7].

As one expert explains:

"AI models like GPT-4, Claude, or Llama are stateless. Every API call is independent - the model has no internal awareness of previous conversations unless you explicitly provide that context." [7]

This is where dialogue state tracking comes into play. Think of it like the AI’s working memory - it helps the system figure out what’s important in a conversation. It keeps tabs on what the user is trying to achieve, what’s already been covered, and what the system needs to remember to make the interaction smooth and coherent [1]. Without this, conversations with AI would feel disjointed and frustrating.
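To make the stateless behavior concrete, here is a minimal sketch in which the application, not the model, carries the history. `fake_model` is a hypothetical stand-in for a real LLM call, and the `Conversation` class is an illustrative assumption rather than any vendor's API.

```python
Message = dict  # {"role": "...", "content": "..."}


def fake_model(messages: list) -> str:
    """Hypothetical stand-in for an LLM call: it only sees what is passed in."""
    user_turns = [m for m in messages if m["role"] == "user"]
    return f"I can see {len(user_turns)} user message(s)."


class Conversation:
    """Simulates memory by resending the full history on every call."""

    def __init__(self, model, system_prompt: str):
        self.model = model
        self.messages = [{"role": "system", "content": system_prompt}]

    def send(self, text: str) -> str:
        self.messages.append({"role": "user", "content": text})
        reply = self.model(self.messages)  # prior turns are provided explicitly
        self.messages.append({"role": "assistant", "content": reply})
        return reply


chat = Conversation(fake_model, "You are a helpful customer service assistant")
first_reply = chat.send("I have a billing question.")
second_reply = chat.send("Also, when will my order arrive?")
```

If the second call were made with only the newest message, the stand-in model would report a single user message, which is the "starting from scratch" behavior described above.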

What Is Dialogue State Tracking?

Dialogue state tracking is how AI systems keep up with the flow of a conversation. It tracks things like user intent, past exchanges, and the system’s current state. For example, if someone starts a chat about a billing issue and then shifts to asking about delivery times, the AI has to recognize that change and respond appropriately.

To do this, the system organizes information into structured formats. Instead of storing vague notes like "user mentioned premium plan", it uses precise data points, such as:

plan_type: premium, inquiry: billing [7][2].

This structured approach makes it easier for the AI to retrieve and use information during the conversation.
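As a rough illustration of such structured data points, the sketch below stores the state as named fields instead of free-form notes. The `DialogueState` class and its field names are assumptions made for this example; only `plan_type` and `inquiry` come from the text above.

```python
import json
from dataclasses import dataclass, field, asdict
from typing import Optional


@dataclass
class DialogueState:
    inquiry: Optional[str] = None                        # what the user wants right now
    slots: dict = field(default_factory=dict)            # precise facts, e.g. plan_type
    covered_topics: list = field(default_factory=list)   # topics already handled

    def update(self, inquiry: Optional[str] = None, **slots) -> None:
        """Record new facts and note when the user switches topic."""
        if inquiry and inquiry != self.inquiry:
            if self.inquiry:
                self.covered_topics.append(self.inquiry)
            self.inquiry = inquiry
        self.slots.update(slots)

    def to_prompt(self) -> str:
        """Serialize the state so it can be included in the next prompt."""
        return json.dumps(asdict(self), sort_keys=True)


state = DialogueState()
state.update(inquiry="billing", plan_type="premium")
state.update(inquiry="delivery")  # the user shifts from billing to delivery times
```

After the second update, the state still holds `plan_type: premium` while recording that billing has already been covered.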

However, AI systems have a limited "context window" - a set amount of information they can process at any given time [2][7]. Once the conversation exceeds this limit, the system has to prioritize what to keep and what to discard. Managing this balance is key to maintaining high-quality interactions.

Core Components of Dialogue State Tracking

A system’s ability to manage ongoing conversations relies on several interconnected elements. The conversation history acts as the backbone, often stored in a buffer that holds the last 10 to 20 exchanges [7]. This ensures the AI remembers recent details without overloading its processing capacity.
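A buffer like this can be approximated with a fixed-length queue. The sketch below is one possible shape, assuming an "exchange" means one user message plus one assistant reply; the class name and default size are illustrative.

```python
from collections import deque


class HistoryBuffer:
    """Keeps only the most recent exchanges; older ones fall off automatically."""

    def __init__(self, max_exchanges: int = 10):
        self._exchanges = deque(maxlen=max_exchanges)

    def add(self, user_text: str, assistant_text: str) -> None:
        self._exchanges.append((user_text, assistant_text))

    def as_messages(self) -> list:
        """Flatten the stored exchanges into chat-style messages."""
        messages = []
        for user_text, assistant_text in self._exchanges:
            messages.append({"role": "user", "content": user_text})
            messages.append({"role": "assistant", "content": assistant_text})
        return messages


buffer = HistoryBuffer(max_exchanges=2)
buffer.add("q1", "a1")
buffer.add("q2", "a2")
buffer.add("q3", "a3")  # q1/a1 is evicted here
```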

In real time, the AI updates its understanding of the user’s goals and emotional tone [5][6]. For instance, it can pick up on whether the user is frustrated or satisfied and adjust its responses accordingly. This adaptability makes interactions feel more human and less mechanical.

To maintain context over time, dialogue state tracking uses a layered memory system:

| Memory Type | Storage Method | Purpose |
|---|---|---|
| Working Memory | Context Window | Handles immediate conversation flow |
| Session Memory | Redis / Fast Cache | Stores recent history within a single session |
| Episodic Memory | Vector Database | Recalls past discussions semantically |
| Semantic Memory | Structured Database | Stores permanent facts and user preferences |

Each layer serves a specific role. For example, Working Memory focuses on the current interaction, while Semantic Memory ensures the AI remembers long-term details like a user’s preferences or frequently asked questions. Together, these layers improve response accuracy and reduce the time it takes to resolve user queries using personalized AI tools.
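The four layers can be pictured with plain in-memory containers. This is only a sketch: the lists and dictionaries below stand in for the context window, cache, vector database, and structured database, and the keyword match in `build_context` is a deliberately simple substitute for real semantic recall.

```python
from dataclasses import dataclass, field


@dataclass
class LayeredMemory:
    working: list = field(default_factory=list)   # messages in the current prompt
    session: dict = field(default_factory=dict)   # stand-in for Redis / a fast cache
    episodic: list = field(default_factory=list)  # stand-in for a vector database
    semantic: dict = field(default_factory=dict)  # stand-in for a structured database

    def end_session(self, summary: str) -> None:
        """Archive a summary of the session and clear the short-lived layers."""
        self.episodic.append(summary)
        self.working.clear()
        self.session.clear()

    def build_context(self, query: str) -> dict:
        """Gather what each layer contributes to the next prompt."""
        words = set(query.lower().split())
        recalled = [s for s in self.episodic if words & set(s.lower().split())]
        return {
            "facts": dict(self.semantic),
            "session": dict(self.session),
            "recalled": recalled,
            "recent": list(self.working),
        }


memory = LayeredMemory()
memory.semantic["plan_type"] = "premium"
memory.session["inquiry"] = "billing"
memory.working.append("Hi, I was charged twice.")
memory.end_session("Customer asked about a billing refund")
context = memory.build_context("refund status")
```

Here the long-lived fact and the archived summary survive the end of the session, while the working and session layers start empty again.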

Finally, the system incorporates high-level directives - guidelines like "You are a helpful customer service assistant" - to maintain a consistent tone and personality throughout the conversation [2]. These directives, combined with the memory layers, create the impression of an attentive and reliable conversational partner.


Methods for Preserving Context in AI Conversations

AI systems rely on several strategies to maintain coherent conversations while working within the constraints of token budgets and response speed. These methods ensure that interactions remain efficient, relevant, and responsive, even as conversations grow in complexity.

Sliding Window Approach

The sliding window method focuses on keeping the most recent exchanges visible to the AI, while older messages are removed. Since large language models (LLMs) don’t have built-in memory, developers simulate it by including only the last N messages in each new prompt [2]. This helps avoid exceeding the model's token limits, which can range from 4,000 to 128,000 tokens, depending on the model [2].

This approach is especially useful for real-time interactions, such as customer support chats or phone calls handled by an AI virtual receptionist, where speed is critical. Trimming older data reduces latency and keeps response times quick. It also helps manage costs, since LLM providers often charge based on the number of tokens processed. Businesses using this method have seen resolution times drop by up to 47% [3].

The tricky part is choosing the right window size. A window that’s too small risks losing important details, while one that’s too large can hurt performance. Monitoring context length and trimming thoughtfully can help avoid issues like the AI generating off-topic or confusing responses [2].
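One way to size the window is by token budget rather than by message count. The sketch below assumes a crude estimate of about four characters per token; a real system would use the tokenizer that matches its model.

```python
def estimate_tokens(text: str) -> int:
    """Crude assumption: roughly 4 characters per token."""
    return max(1, len(text) // 4)


def sliding_window(messages: list, max_tokens: int) -> list:
    """Keep the newest messages whose estimated tokens fit within max_tokens."""
    kept = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = estimate_tokens(message["content"])
        if used + cost > max_tokens:
            break  # everything older than this point is dropped
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = [
    {"role": "user", "content": "a" * 40},       # about 10 tokens
    {"role": "assistant", "content": "b" * 40},  # about 10 tokens
    {"role": "user", "content": "c" * 40},       # about 10 tokens
]
window = sliding_window(history, max_tokens=20)
```

With a budget of 20 estimated tokens, only the two most recent messages survive, which shows both the benefit (a bounded prompt) and the risk (the first message is gone).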

Hierarchical Summarization

In contrast to the sliding window, hierarchical summarization preserves long-term conversation history by compressing older exchanges into concise summaries. Recent messages remain in full detail, while earlier parts are summarized to capture key points, such as a customer’s preferences or specific requests [2].

This method strikes a balance between retaining important details and staying within token limits. It’s particularly effective for maintaining continuity in lengthy conversations, without the need to replay the entire history.

To enhance accuracy, summaries can be combined with structured formats like JSON to record variables (e.g., plan_type: premium or inquiry: billing). If the summarized context becomes unclear, the AI can be trained to ask follow-up questions to clarify details [2].
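A two-level version of this idea can be sketched as follows. The `naive_summarizer` is a placeholder assumption that keeps the first sentence of each older message; in practice the summary would more likely come from an LLM call.

```python
def naive_summarizer(messages: list) -> str:
    """Placeholder summarizer: keeps the first sentence of each message."""
    points = [m["content"].split(".")[0].strip() for m in messages]
    return "Earlier in the conversation: " + "; ".join(points) + "."


def compress_history(messages: list, keep_recent: int = 4,
                     summarize=naive_summarizer) -> list:
    """Replace older messages with one summary; keep recent ones verbatim."""
    if len(messages) <= keep_recent:
        return list(messages)
    split = len(messages) - keep_recent
    older, recent = messages[:split], messages[split:]
    summary = {"role": "system", "content": summarize(older)}
    return [summary] + recent


conversation = [
    {"role": "user", "content": "I am on the premium plan. Thanks"},
    {"role": "assistant", "content": "Noted"},
    {"role": "user", "content": "Where is my order?"},
]
compressed = compress_history(conversation, keep_recent=1)
```

The latest message stays in full, while the two older ones collapse into a single summary line that still mentions the premium plan.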

Dynamic Context Selection

Dynamic context selection takes a more focused approach by identifying and retrieving only the most relevant parts of past conversations. This method is particularly useful when the topic of conversation shifts. For instance, if a customer moves from asking about billing to delivery times, the AI pulls up only the relevant snippets, even if unrelated messages were exchanged in between [1].

This strategy also integrates real-time signals, such as the customer’s location, device type, or current webpage. For example, if someone is browsing a returns page, the AI might proactively offer a return label [3]. Businesses in San Francisco using this approach have reported resolution time reductions of up to 40% [3].

Dynamic context selection often uses Retrieval-Augmented Generation (RAG) to extract specific, relevant details from external memory, keeping the active prompt size manageable [2]. Setting token usage thresholds - like 75% of the budget - can trigger context compression before hitting system limits. However, it’s essential to avoid including irrelevant data, as this can feel intrusive to users [3].
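The selection step and the threshold check can be sketched together. The word-overlap scoring and the small stopword list below are simplifying assumptions; a production RAG pipeline would typically rank snippets with embeddings instead.

```python
import re

STOPWORDS = {"a", "an", "the", "on", "my", "is", "it", "any", "will", "when", "to", "of"}


def keywords(text: str) -> set:
    """Lowercase word set with common filler words removed."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def select_relevant(query: str, history: list, top_k: int = 2) -> list:
    """Return up to top_k past messages that share the most keywords with the query."""
    query_words = keywords(query)
    scored = []
    for index, text in enumerate(history):
        overlap = len(query_words & keywords(text))
        if overlap > 0:
            scored.append((overlap, index, text))
    scored.sort(key=lambda item: (-item[0], item[1]))
    chosen = sorted(scored[:top_k], key=lambda item: item[1])  # chronological order
    return [text for _, _, text in chosen]


def needs_compression(used_tokens: int, budget: int, threshold: float = 0.75) -> bool:
    """True once usage reaches the given share of the token budget."""
    return used_tokens >= budget * threshold


past_messages = [
    "Invoice shows a double charge",
    "Thanks, that fixed it",
    "When will my delivery arrive?",
    "The delivery address is 12 Main St",
]
relevant = select_relevant("Any update on the delivery?", past_messages)
```

Only the two delivery-related messages are retrieved, even though billing messages were exchanged earlier in the same conversation.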

| Strategy | Best Use Case | Key Benefit |
|---|---|---|
| Sliding Window | Fast-paced, high-volume interactions | Reduces latency and costs; quicker responses |
| Hierarchical Summarization | Long conversations needing historical context | Maintains balance between detail and efficiency |
| Dynamic Context Selection | Multi-topic or proactive interactions | Retrieves relevant details for changing topics |

Dialzara: Using AI Context Tracking for Business

Dialzara has developed a smart approach to context tracking, designed to address the unique demands of small and medium-sized businesses (SMBs). Its system operates on four levels: System (always retained information), Pinned (critical details like customer names or account numbers), Recent (current conversation), and Historical (older messages that are dropped first when space runs low). This setup ensures that even during extended conversations - lasting up to 20 turns and covering 50,000 tokens - important information remains intact [1]. When a conversation nears 75% of its token limit, Dialzara activates hierarchical summarization, condensing older exchanges into concise 2–3 sentence summaries [1]. The system also uses dynamic topic tracking to classify messages (e.g., billing or technical support), which helps retrieve relevant historical context when the subject of the conversation shifts.
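The priority order described above (Historical dropped first, System and Pinned always retained) can be illustrated with a short sketch. This is not Dialzara's actual implementation; the `Item` type, the token counts, and the `fit_to_budget` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Item:
    tier: str    # "system", "pinned", "recent", or "historical"
    text: str
    tokens: int


def fit_to_budget(items: list, budget: int) -> list:
    """Drop historical items first, then recent ones, oldest first, until the total fits.

    System and pinned items are never dropped in this sketch.
    """
    kept = list(items)
    total = sum(item.tokens for item in kept)
    for tier in ("historical", "recent"):
        for item in [i for i in kept if i.tier == tier]:
            if total <= budget:
                return kept
            kept.remove(item)
            total -= item.tokens
    return kept


items = [
    Item("system", "You are a receptionist", 10),
    Item("pinned", "Account 1234", 5),
    Item("historical", "Old chat A", 30),
    Item("historical", "Old chat B", 30),
    Item("recent", "Latest question", 20),
]
trimmed = fit_to_budget(items, budget=70)
```

With a budget of 70 tokens, only the oldest historical item is removed; a much smaller budget would strip the conversation down to the system and pinned entries.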

This meticulous context management allows Dialzara to craft responses that align with the specific terminology and operational needs of various industries.

Customized AI for Different Industries

Dialzara takes its context management a step further by tailoring AI responses for specific industries. Through persistent system prompts, it embeds industry-specific personas and language rules that stay active throughout conversations [1]. For example, in legal settings, the AI incorporates terms like "retainer" and "discovery", while in healthcare, it handles specialized phrases like "prior authorization" and "EOB" with precision. Modern AI agents can now process up to 1 million tokens, equivalent to roughly 750,000 words or several hundred pages of documentation [8]. Instead of relying solely on general-purpose models, businesses can deploy specialized agents that use Retrieval-Augmented Generation (RAG) databases for highly accurate, industry-specific knowledge [8]. When interacting with business databases or APIs, Dialzara condenses large datasets - such as summarizing 50 rows of order history into a single factual sentence - ensuring efficient management of the conversation's context [1].
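Condensing query results before they enter the prompt might look like the sketch below. The row fields (`date`, `amount`, `status`) and the wording of the sentence are assumptions for illustration, not a description of Dialzara's data model.

```python
def summarize_orders(rows: list) -> str:
    """Collapse order rows into one factual sentence for the prompt."""
    if not rows:
        return "The customer has no orders on record."
    total = sum(row["amount"] for row in rows)
    latest = max(rows, key=lambda row: row["date"])  # ISO dates sort as strings
    return (
        f"The customer has {len(rows)} orders totaling ${total:,.2f}; "
        f"the most recent, on {latest['date']}, is {latest['status']}."
    )


orders = [
    {"date": "2024-01-05", "amount": 20.0, "status": "delivered"},
    {"date": "2024-03-12", "amount": 35.5, "status": "shipped"},
]
order_summary = summarize_orders(orders)
```

The same function would turn 50 rows into a single sentence of similar length, so the cost in tokens stays roughly constant as the order history grows.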

Smooth Customer Interactions with AI Agents

Dialzara’s approach to managing token counts and pinning crucial business data ensures smooth, uninterrupted conversations [1]. This eliminates the need for customers to repeat themselves during multi-turn interactions. Key details like account numbers, customer names, and the current issue remain accessible throughout the conversation. This context-aware strategy allows Dialzara to handle complex, multi-step processes - such as scheduling appointments, processing payments, or conducting client intake - within a single, seamless interaction. Even when topics change mid-conversation, the AI retains earlier context, delivering a natural and efficient experience akin to speaking with a knowledgeable human assistant. For businesses managing high call volumes, this results in quicker resolutions, happier customers, and significant cost savings compared to traditional staffing models.

How Context-Aware AI Improves Customer Satisfaction

Context-aware AI is revolutionizing customer service by addressing one of the biggest frustrations with traditional AI: the lack of memory. Conventional systems often "forget" previous interactions, forcing customers to repeatedly explain their issues. This repetitive cycle is one of the main reasons users abandon chatbots and prefer speaking with human agents [7][2].

By leveraging dialogue state tracking and layered memory architectures, modern context-aware AI ensures that vital details - like a customer's name, account number, or ongoing issue - are retained throughout an interaction. This memory persists even during extended conversations spanning dozens of exchanges and thousands of tokens [1]. The result? A smoother, less frustrating experience that directly boosts customer satisfaction.

Reducing Repetition and Saving Time

One of the standout benefits of context-aware AI is its ability to eliminate repetitive questions. For instance, if a customer asks about billing and follows up minutes later, the AI can recall the earlier exchange without needing the customer to re-explain. By pinning key details and summarizing conversations, the system maintains continuity and preserves progress [1].

The impact of this efficiency is clear. While traditional linear truncation methods fail to preserve conversational coherence 78% of the time, semantic compression - where older exchanges are condensed into structured summaries - maintains coherence in 94% of cases [9]. For businesses managing high call volumes, this translates to faster resolutions and reduced costs.

Providing Personalized and Natural Responses

Context-aware AI goes beyond efficiency by delivering personalized interactions. By remembering details like a customer's preferences or industry, the system can tailor responses to meet their specific needs [7]. Advanced systems use vector databases to retrieve past conversations semantically, enabling the AI to recall relevant history based on meaning rather than exact keywords [7]. For example, if a customer mentions a budget constraint today, the AI might reference a pricing discussion from weeks earlier.
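Semantic recall of this kind usually comes down to comparing vectors. The sketch below uses tiny hand-written vectors as hypothetical embeddings and a toy in-memory store; a real system would obtain embeddings from a model and store them in a vector database.

```python
import math


def cosine(a: list, b: list) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class TinyVectorStore:
    """Toy in-memory store that ranks saved texts by vector similarity."""

    def __init__(self):
        self._items = []

    def add(self, text: str, vector: list) -> None:
        self._items.append((text, vector))

    def search(self, query_vector: list, top_k: int = 1) -> list:
        ranked = sorted(
            self._items,
            key=lambda item: cosine(query_vector, item[1]),
            reverse=True,
        )
        return [text for text, _ in ranked[:top_k]]


store = TinyVectorStore()
# Hypothetical embeddings: the first dimension loosely means "money", the last "technical".
store.add("Discussed pricing tiers last month", [0.9, 0.1, 0.0])
store.add("Reported a login bug", [0.0, 0.2, 0.9])
matches = store.search([0.8, 0.3, 0.1])  # e.g. a message about a budget constraint
```

The query shares no keywords with the stored pricing note, yet it is returned first because the vectors point in a similar direction.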

These systems also excel at handling implicit references. When a customer asks, "Is it available there?" the AI understands that "it" refers to a previously mentioned product and "there" to a discussed location [2][9]. This ability to resolve pronouns and maintain topic continuity makes interactions feel more natural - like speaking with a knowledgeable assistant who truly understands the customer.

"The agents that perform best aren't necessarily using the most sophisticated models. They're the ones with well-designed memory systems that make users feel understood and remembered." - Particula.tech [7]

Conclusion

Context-aware dialogue state tracking has reshaped how businesses engage with customers by addressing a major limitation of large language models: their lack of memory. Instead of repetitive, disconnected exchanges, these systems enable smooth, meaningful interactions that boost customer satisfaction.

The benefits for businesses are clear and measurable. By incorporating real-time context, companies can cut resolution times by up to 47%, and many have reported substantial cost savings after adopting context-aware AI systems [3]. These advancements translate directly into better experiences, as the AI retains past interactions and understands subtle cues or references.

"When customers see the value, and when every message a brand sends feels personalized, relevant, and contextual, then customers will engage more." - Chris Koehler, CMO, Twilio [3]

A strong technical foundation is key to achieving these results. Multi-layered memory systems, smart truncation techniques, periodic summarization, and effective data management ensure that these systems remain fast and reliable, even during lengthy conversations.

The business advantages are undeniable. Solutions like Dialzara demonstrate how AI can maintain conversational flow while adapting to the specialized language of different industries. Dialzara’s AI phone agents are a prime example, efficiently managing customer interactions and cutting costs by up to 90%. This technology delivers the kind of personalized, seamless service that today’s customers expect.

FAQs

How is “context” different from AI memory?

In AI systems, context is all about the here and now. It includes real-time details like customer intent, tone of voice, previous interactions, and situational factors that influence a conversation. This helps ensure responses are tailored and relevant at that exact moment.

On the other hand, AI memory is about the long game. It involves storing information from multiple interactions - like customer preferences or past conversations - to maintain consistency and improve future interactions. In short, context handles the present, while memory focuses on creating a seamless experience over time.

What should each memory layer (working, session, episodic, semantic) store?

In context-aware AI systems, working memory handles the ongoing conversation, keeping track of the immediate dialogue. Session memory preserves recent exchanges to maintain continuity during a single session. Episodic memory archives past interactions, allowing the system to offer more personalized responses over time. Meanwhile, semantic memory holds structured facts and user preferences, ensuring consistency across different sessions. These layers work together to create smooth, multi-turn conversations by combining real-time context with long-term knowledge.

How can I keep context without exceeding token limits or raising costs?

To streamline context management and cut down on token usage and costs, focus on summarizing conversations to capture key details rather than retaining the full history. Use retrieval-augmented generation (RAG) to pull in relevant information from external sources when needed. Additionally, prioritize remembering only essential elements, like customer preferences, and include these selectively in prompts to ensure coherence while keeping token usage minimal.
